Transformers & Attention

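The attention mechanism revolutionised sequence modelling. Rather than reading a sequence one token at a time as recurrent networks do, a transformer lets every token attend to every other token in its context at once, so training can be parallelised across the whole sequence. That parallelism is what made today's very large models practical across language, vision, and other modalities.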
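
The core operation is scaled dot-product attention, softmax(QKᵀ / √d_k) V, as introduced in the original transformer paper. The sketch below is a minimal pure-Python version that assumes a single head, no masking, and small list-of-lists matrices; real implementations use batched tensor libraries. Each query row is computed independently of the others, which is the property that lets a transformer process a whole context in parallel.

```python
import math

def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Q: n_q x d_k, K: n_k x d_k, V: n_k x d_v, all as lists of lists.
    """
    d_k = len(K[0])
    scale = 1.0 / math.sqrt(d_k)
    out = []
    for q in Q:  # each query row is independent, so these iterations could run in parallel
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in K]
        weights = softmax(scores)
        # The output row is the attention-weighted average of the value vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
out = attention(Q, K, V)
print(out)
```

Here the query matches the first key more closely, so the output is weighted toward the first value vector.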

Deep Dive

From transformer architectures to generative models — the concepts shaping the future of AI.

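From AlphaGo to robotic manipulation, reinforcement learning (RL) agents learn by trial and error: they act in an environment, receive rewards, and adjust their behaviour to maximise long-term return. Reinforcement learning from human feedback (RLHF) has become the cornerstone of aligning large language models with human preferences.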
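
The learn-by-interaction loop can be illustrated with tabular Q-learning, a classic RL algorithm that is far simpler than the methods behind AlphaGo or RLHF. This is a minimal sketch on a hypothetical five-state corridor (the environment, rewards, and hyperparameters are all assumptions for illustration): the agent starts at the left end, earns a reward of 1 only on reaching the right end, and never sees the reward rule directly, only the transitions it experiences.

```python
import random

N_STATES = 5        # hypothetical corridor: states 0..4, reaching state 4 ends the episode
ACTIONS = (-1, +1)  # index 0 = move left, index 1 = move right

def step(state, action):
    """Toy environment: returns (next_state, reward, done)."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    if nxt == N_STATES - 1:
        return nxt, 1.0, True
    return nxt, 0.0, False

def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(N_STATES)]  # Q[state][action] value estimates
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit current estimates, sometimes explore.
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                best = max(Q[s])
                a = rng.choice([i for i, q in enumerate(Q[s]) if q == best])  # random tie-break
            s2, r, done = step(s, ACTIONS[a])
            # Move Q(s, a) toward r + gamma * max_a' Q(s', a'); no bootstrapping at the goal.
            target = r if done else r + gamma * max(Q[s2])
            Q[s][a] += alpha * (target - Q[s][a])
            s = s2
    return Q

Q = q_learning()
policy = ["right" if q[1] > q[0] else "left" for q in Q[:-1]]
print(policy)
```

After training, the greedy policy should move right in every non-terminal state. Random tie-breaking matters here: with all estimates starting at zero, always picking the first action would keep the agent pressed against the left wall for a long time before it ever saw a reward.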

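Generative adversarial networks (GANs) pit a generator against a discriminator in an adversarial game: the generator tries to produce samples the discriminator cannot distinguish from real data, while the discriminator learns to tell the two apart. Although newer approaches such as diffusion models have superseded them in many domains, GANs remain influential in high-fidelity image synthesis and domain adaptation.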
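
The adversarial game is usually written as the minimax objective from the original GAN paper (Goodfellow et al., 2014), where D(x) is the discriminator's estimated probability that x comes from the data and G(z) maps a noise vector z to a generated sample. The second line gives the optimal discriminator for a fixed generator whose samples follow p_g:

```latex
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\bigl(1 - D(G(z))\bigr)\right]

D^*_G(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}
```

Substituting D* back into the objective shows that the generator's loss is minimised exactly when p_g = p_data, which is the sense in which the game drives the generator toward the data distribution.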

By learning to reverse a process that gradually corrupts data with noise, diffusion models have become the dominant paradigm for image, audio, and video generation, powering tools that have fundamentally changed creative work.
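
The noise process being reversed has a convenient closed form in DDPM-style models: with a noise schedule β_t and ᾱ_t = ∏_{s≤t}(1 − β_s), a noisy sample is x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε with ε ~ N(0, I). The sketch below implements only this forward (noising) step in pure Python, assuming a linear schedule from 1e-4 to 0.02 over 1,000 steps; the learned denoising network that runs the process in reverse is not shown.

```python
import math
import random

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    # Linearly increasing per-step noise variances (assumed schedule).
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]

def alpha_bars(betas):
    # Cumulative product of (1 - beta_s): how much original signal survives by step t.
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def q_sample(x0, t, abar, rng):
    """Jump straight to x_t ~ q(x_t | x_0): sqrt(abar_t) * x0 + sqrt(1 - abar_t) * noise."""
    a = abar[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]

abar = alpha_bars(linear_beta_schedule())
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5]
print(q_sample(x0, 10, abar, rng))   # mostly signal: still close to x0
print(q_sample(x0, 999, abar, rng))  # almost pure Gaussian noise
```

Because x_t can be sampled directly from x_0 at any step, training can pick a random t per example instead of simulating the whole chain.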

Data

How the leading models stack up across standardised evaluations this year.

| Model | Developer | MMLU Score | Parameters | HumanEval | MATH | Status |
|---|---|---|---|---|---|---|
| Helios-Ultra | DeepLogic Research | | 1.8T | 91.4% | 89.7% | Top |
| Nexus-7 Pro | Anthropic Labs | | 540B | 89.2% | 87.3% | New |
| Orion-128K | OpenMind AI | | 405B | 86.5% | 84.1% | Open |
| Quasar-Vision | Google DeepMind | | 700B | 84.0% | 82.6% | New |
| MistralX-22B | Mistral AI | | 22B | 79.8% | 74.2% | Open |
| SakuraLM-72B | Sakura Intelligence | | 72B | 76.1% | 71.0% | Open |

Academia

Breakthroughs from the world's leading ML research labs.

Researchers at Google DeepMind demonstrate that sparse mixture-of-experts (MoE) architectures can scale to ten trillion parameters while keeping inference efficient, reaching a scale previously thought computationally prohibitive and opening up new capabilities.
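
Sparse MoE models can grow their parameter count without a matching rise in inference cost because of routing: a small gating network sends each token to only k of the experts. The sketch below shows generic top-k gating in the spirit of Shazeer et al. (2017); the linear router, the toy scaling experts, and k = 2 are illustrative assumptions, not details of the DeepMind work described above.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_logits, k=2):
    """Return [(expert_index, weight), ...] for the k highest-scoring experts.

    The softmax is taken over the selected logits only, so the weights sum to 1.
    """
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    weights = softmax([gate_logits[i] for i in top])
    return list(zip(top, weights))

def moe_layer(x, gate_weights, experts, k=2):
    """Sparse MoE forward pass for a single token vector x.

    gate_weights: one weight vector per expert (a hypothetical linear router).
    experts: callables mapping a vector to a vector of the same length.
    Only k experts are evaluated, so per-token compute depends on k,
    not on the total number of experts.
    """
    logits = [sum(w * xi for w, xi in zip(wv, x)) for wv in gate_weights]
    out = [0.0] * len(x)
    for idx, weight in top_k_route(logits, k):
        y = experts[idx](x)  # unselected experts are never called
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Four toy experts that just scale their input (illustrative only).
experts = [lambda v, c=c: [c * vi for vi in v] for c in (1.0, 2.0, 3.0, 4.0)]
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
print(moe_layer([1.0, 2.0], gate_weights, experts, k=2))
```

With this input the router picks experts 1 and 0; experts 2 and 3 are skipped entirely, which is where the compute savings come from.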

A new paradigm for AI alignment allows models to iteratively critique and revise their own outputs using a learned constitutional framework, dramatically reducing reliance on expensive human feedback pipelines.

By augmenting neural world models with structured external memory, autonomous agents can now plan effectively over horizons exceeding one million environment steps — a 100x improvement over prior approaches.

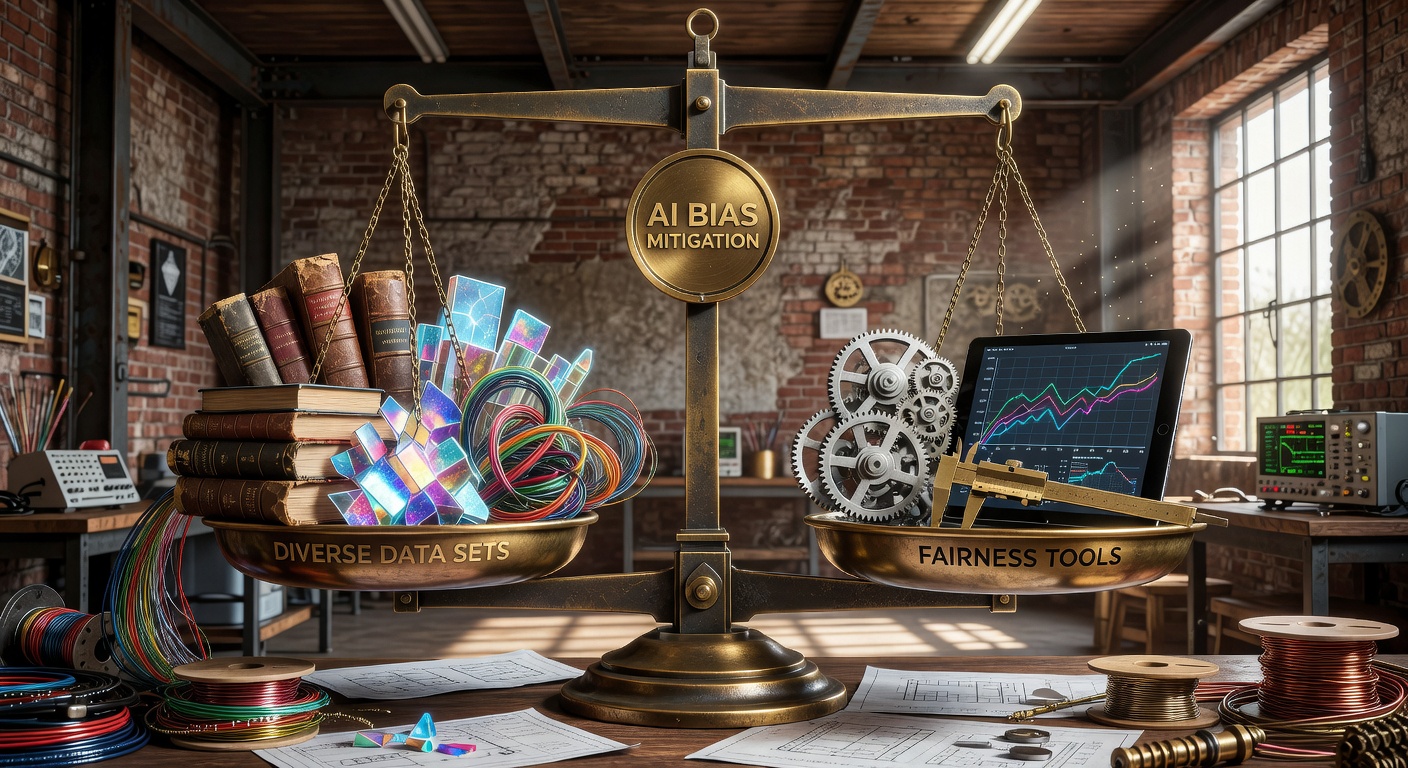

ML in Practice

The gap between benchmark performance and real-world deployment remains one of ML's most pressing challenges. Distribution shift, dataset biases, and adversarial vulnerability continue to undermine model reliability at scale.

Leading organisations are now investing heavily in bias auditing pipelines, red-teaming protocols, and interpretability tooling — recognising that a model that cannot be understood cannot be trusted.
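
One basic step in a bias audit is to break an aggregate metric down by subgroup, since a model can score well overall while underperforming on a smaller group. A minimal sketch, assuming labelled predictions that carry a hypothetical group attribute; real audits track many more metrics (for example per-group calibration and false-positive rates).

```python
from collections import defaultdict

def per_group_accuracy(y_true, y_pred, groups):
    """Accuracy computed separately for each subgroup label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}

def max_accuracy_gap(acc_by_group):
    # A simple disparity measure: best-served group minus worst-served group.
    return max(acc_by_group.values()) - min(acc_by_group.values())

# Toy data (illustrative only): overall accuracy is 5/8, but the groups differ.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
acc = per_group_accuracy(y_true, y_pred, groups)
gap = max_accuracy_gap(acc)
print(acc, gap)
```

A gap above some agreed threshold would then flag the model for closer review before deployment.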

Our in-depth series examines the engineering and ethics behind building ML systems that perform equitably across diverse populations and edge cases.